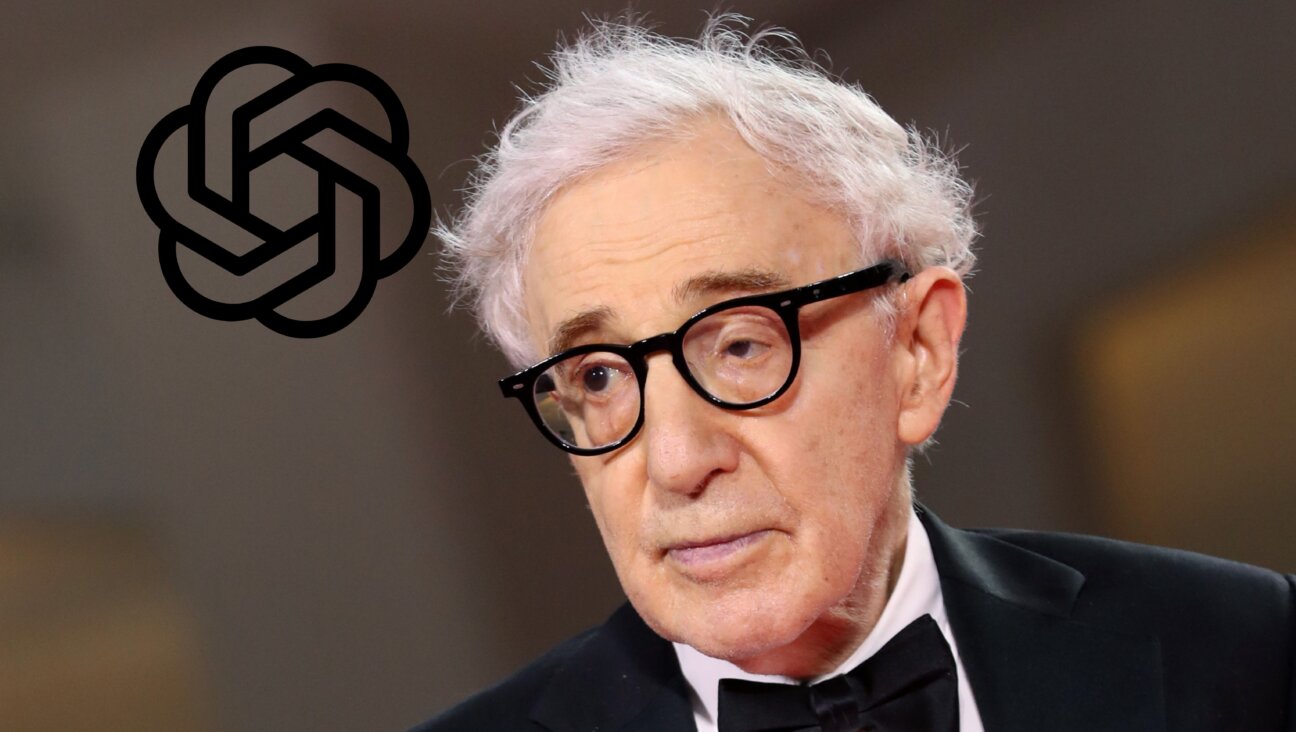

How Mark Zuckerberg and Sheryl Sandberg created history’s most dangerous golem

Sheryl Sandberg & Mark Zuckerberg Image by Getty Images

An Ugly Truth: Inside Facebook’s Battle for Domination

By Sheera Frenkel and Cecilia Kang

Harper, $29.99, 352 pages

It should be a great Jewish American success story. Perhaps the greatest. Mark, Jewish son of a psychiatrist and a dentist, has a vision of how to harness technology to connect people. Sheryl, daughter of a college French teacher and an ophthalmologist, has the people skills and business acumen to build it to a massive scale.

Soon, in one of the greatest Jewish business partnerships in American history, and in world history, they have connected billions of people through their Facebook. Indeed, in many countries there is no significant distinction between Facebook and the internet. Their business is worth more than a trillion dollars, with annual revenue of nearly $100 billion, and there are more people in touch across the globe on their networks (Facebook, Instagram, WhatsApp) than were alive when either of them was born.

And yet it’s an American Tragedy.

Even before “An Ugly Truth” by Cecilia Kang and Sheera Frenkel, it was clear that Mark Zuckerberg’s online machine, grown to gigantic proportions by Sheryl Sandberg, had staggered blindly. Like a golem created by a careless yeshiva boy, it has careened out of control, its phenomenally powerful limbs serving all manner of hateful masters.

Instead of protecting the vulnerable, it has promoted genocide in Myanmar, pogroms in India and deadly anti-vaxxer misinformation across the world, as well as almost certainly tipping the 2016 American general election and the 2016 Brexit vote. In Kang and Frenkel’s lucid account we get to hear the step-by-step details of the energy and incompetence of the world’s biggest influencer and the American government’s utter inability to regulate its grotesque excesses.

The “Ugly Truth” of the title is neither particularly obvious nor a particularly dramatic reveal. It is Kang and Frenkel’s version of the “Social Dilemma.” At the end of their meticulous history of Facebook — exactly what you would expect from New York Times reporters with a track record of insight and care — they note that “the platform is built upon a fundamental, possibly irreconcilable dichotomy: its purported mission is to advance society by connecting people while also profiting off them.” As Kang and Frenkel point out, this dichotomy “is Facebook’s dilemma and its ugly truth.”

In case, like the members of Congress who grilled Zuckerberg in 2018, you don’t understand the premise of Facebook, let me explain the golem. Zuckerberg’s dream is to use technology to connect people (“It is our mission to connect everyone around the world.”) That’s a laudable dream — and one that he has come incredibly close to achieving, against all odds. There just happen to be two related core drawbacks in this ugly truth. First, how to fund the dream? Second, what rules should apply to the connections between people?

Because Facebook connects people within its closed system, it can measure every detail of your attention, intention and proclivity. Plus, as has been well established elsewhere, Facebook spies on you far beyond its own walls. So, on top of the information you willingly surrender (name, date of birth, location, education, favorite sports teams), Facebook learns more about you and your habits than you probably know yourself.

Generally speaking, Facebook doesn’t share people’s information; rather, it makes money from people who pay Facebook to change your mind. To call these people advertisers is not wrong, but it’s an invitation to anachronistic thinking. Don’t imagine a static billboard selling Coca-Cola to anyone who passes. The people who promote their ideas on Facebook can tailor their messages to reach specific groups whilst being invisible to others, and with specific measurable metrics of impact. I know who watched my video about New York bagels, for how long and how many people clicked through to buy New York bagels. I can exactly tailor my advertising to nudge people who might be susceptible a little further into thinking that we oughta do something about the Jews who run the country.

But, bigotry to one side for a second, we’re all promoting something. The distinction between paying promoters and someone with a Facebook page or a Facebook group is mainly one of guaranteed reach. If I want people to read my joke from my Facebook page, I’ll tag some friends and post it in groups that I think will like it. It might be read by a few hundred or thousand people. But if I pay to promote it, I can guarantee what kinds of people will see it and, roughly, how many. And what can paying and other promoters say on Facebook? Well, that’s been a nearly 20-year journey from “anything” to “nearly anything.”

As of 2019, lies are fine, especially if you are a politician. Hate and most types of misinformation are theoretically not fine, but are practically tolerated. Facebook mostly refuses to take responsibility for moderation, i.e., checking for lies and hate. Even after users have reported them, hundreds of white supremacist and antisemitic Facebook sites remain, in contravention of the private company’s own “Community Standards.”

This year I’ve been working with the Center for Countering Digital Hate to stop anti-vaxx misinformation fatally proliferating on social media. Misinformation about vaccines costs lives, so we notified Facebook about the problem in June 2020. Yet after 12 months of the global pandemic, the anti-vaxxers had actually grown their reach on Facebook. Because bans on copyright infringement and child pornography are rigidly enforced, we know it’s possible to police for moral or financial reasons; Facebook management just chooses not to police incitement to hate or harm.

“An Ugly Truth” shows the repeated failures of a management team and structure to comprehend or care about the harm they are causing in the world through the promotion of hate and misinformation. Incidentally, it shows a society that is currently incapable of holding Facebook meaningfully accountable for its philosophy of moving fast and breaking things. The Facebook content moderation team set itself the lowest bar possible and, arguably, continues to fail to reach that standard.

“The team originally sat under the operations department,” the authors write. “When Dave Willner joined in 2008 it was a small group of anywhere between six and twelve people who added to the running list of content the platform had banned. The list was straightforward (no nudity, no terrorism), but it included no explanation for how the team came to their decisions. ‘If you ran into something weird you hadn’t seen before, the list didn’t tell you what to do. There was policy but no underlying theology,’ said Willner. ‘One of our objectives was: Don’t be a radio for a future Hitler.’”

As well as the problems that are a feature of the platform — privacy intrusion and propaganda — Kang and Frenkel show the company demonstrating two other significant problems, namely infection and security. Even though Facebook already has the personal details of billions in its servers, its main concern is growth. More people must spend more time on Facebook no matter what. With the News Feeds that first arrived in 2006, Facebook took over from users the decision of what you would see on the platform and watched your behavior. It developed an algorithm that would feed you more of what kept you on the site longest, irrespective of truth or helpfulness. In fact, the authors record a Facebook experiment that found optimizing News Feeds for bad news kept users on site for longer than for good news.

Zuckerberg and Sandberg are evangelical connectors. They believe in people and they believe in the righteousness of their mission to connect people. If they connect everyone, they will have won and good things will happen automatically. They believe. Unfortunately, the history of their platform (the history of humankind, in fact) is a salutary reminder that connection is more closely related to contagion than bliss. With Facebook optimizing what appears in everyone’s News Feed for engagement, not usefulness, Mark and Sheryl’s great connecting machine gives freedom of reach to every malign actor and every bigoted idea that comes along. And, as we know, if you connect everyone to Joseph Goebbels or Patient Zero with no filters, you are generating genocide, promoting pandemic.

As well as contagion, another casualty of prioritizing growth is security. We don’t know that anyone has ever directly hacked into Facebook’s servers, but the provision of information to Cambridge Analytica through Facebook’s Open Graph program is probably the most consequential technological leak in history. From 2014, Trump and Brexit lobbyists were able to use private information from about 87 million Facebook users, most of them in the United States. Alex Stamos, who was head of Facebook security from 2016 to 2017, did his best to tighten measures, but left the summer after the 2016 election because six months of his warnings about Russian and fake-news gaming of the Facebook promotional system had gone unheeded. In a nutshell, it wasn’t a priority for Zuckerberg.

There are other significant protagonists in the book — notably Joel Kaplan, the conservative lobbyist who sat behind Brett Kavanaugh after he was accused of rape by Christine Blasey Ford and whose governmental strategies have been central to Facebook’s political message since 2011 — but the book cover carries photos of Zuckerberg and Sandberg for a reason. Their relationship is not without its problems and Zuckerberg is clearly the boss, but they are the axis upon which Facebook revolves.

Zuckerberg is easier to grasp, and Facebook’s weaknesses are Zuckerberg’s weaknesses. He’s a coder of excellence and a leader with technological vision. His upbringing in tony White Plains, New York, followed by Phillips Exeter Academy and Harvard, may have included a “Star Wars” bar mitzvah but didn’t bring him any real experience of antisemitism or any sense that the Force could be used for evil. His entire life has evinced a complete lack of social care, from his early days as a programmer at Harvard, coding a site for visitors to choose which girls were hotter, through to stating that he would not take Holocaust denial down from Facebook because “there are things that different people get wrong,” and running a company whose U.S. workforce was only 3.8% Black five years after bringing Maxine Williams on as a Chief Diversity Officer and promising to make diversity a priority.

Kang and Frenkel do not speculate about Zuckerberg’s “Only connect” obsession being compensatory for his own inabilities, but they do bear testimony that Zuckerberg is obsessed with connecting people while he himself is socially awkward. One method of listening he uses is to stare intently at the speaker with his eyes barely blinking. But he doesn’t seem to hear. Stamos’s serious warnings were ignored for months when Facebook’s timely action could have preserved democracy. Months into briefings about the genocide it was automatically promoting, Facebook still only had one Burmese language specialist for a country with scores of languages.

So, he’s a prodigy and a tech leader but a severely limited political and cultural thinker. Easily swayed by the facile libertarian arguments of Facebook board member Peter Thiel and unwilling to stand up for his or his wife’s beliefs in medical science or immigration, Zuckerberg cannot understand why he’s not regarded as a visionary like Steve Jobs or a philanthropist like Bill Gates, despite the incalculable harm he’s caused.

Harder to understand is Sheryl Sandberg. Yes, she’s built the two biggest advertising machines in human history at Google and Facebook, but she was brought up in a family that went to Soviet Jewry rallies, committed to service and feminism. Indeed, she was able to learn from her mistakes in “Lean In” and, also after suffering the tragedy of her husband’s death, wrote “Option B” to address the blind privilege she showed in that earlier, important book. And yet, at best, she’s been an accessory to the greatest promoter of hate in human history; at worst, she’s been complicit in genocide and the destruction of democracy.

“An Ugly Truth” is brisk but depressing. At every step, Facebook expands, harm is caused, the platform denies harm, expresses optimism, issues a mealy-mouthed apology and, in some cases, incurs a trivial punishment. Zuckerberg has repeatedly condoned practices that mine “seam lines” of divisive disagreement in American society for profit at the expense of democracy.

“The root of the disinformation problem, of course, lay in the technology,” the authors state. “Facebook was designed to throw gas on the fire of any speech that invoked an emotion, even if it was hateful speech — its algorithms favored sensationalism.”

From the beginning, “Content policy was far down on the company’s list of priorities.” As Ethan Zuckerman, creator of the pop-up ad, tells the authors, “Facebook is an incredibly important platform for civil life, but the company is not optimized for civil life…. It is optimized for hoovering data and making profits.”

But, as Facebook has grown, it has so far been beyond the understanding of legislators and now, perhaps, with more people and more money than most sovereign countries, beyond the reach of legislative control. Talking about Jeff Chester, a privacy advocate, and other consumer advocates, Kang and Frenkel note that it is “harder to get government approval for a radio license in rural Montana or to introduce a new infant toy… than to create a social network for a quarter of the world’s population.”

The harms were baked into the design. As Dipayan Ghosh, a former Facebook privacy expert, noted, “We have a set of ethical red lines in society, but when you have a machine that prioritizes engagement, it will always be incentivized to cross those lines.”
