Groups baselessly blame Biden for Delaware Chabad arson

NOV. 10, 2020 • 7 DAYS AFTER THE ELECTION

Welcome to FAHRENHEIT 411, a special newsletter on disinformation and conspiracy theories surrounding the 2020 presidential election. It is produced in partnership with the Institute for Strategic Dialogue, a London-based think tank that studies extremism, and written by Molly Boigon, an investigative reporter at The Forward. Let us know what you think: [email protected]

THE 411: GROUPS BASELESSLY BLAME BIDEN FOR DELAWARE CHABAD ARSON

Hate communities on Facebook, Twitter, YouTube and Reddit shared an article about an arson attack at a Delaware Chabad center nearly 70 times during the week ending Nov. 7, baselessly linking the attack to former Vice President Joe Biden, who grew up in Delaware.

The article, from the website of the anti-Muslim activist Pamela Geller, was a word-for-word reprint of a piece from the right-wing Jewish news site Algemeiner, but Geller’s version added a note about the arson having taken place in “Biden’s state.”

The shares of Geller’s article were part of 2,599 total posts, comments and tweets by hate actors discussing the Jewish community during election week, according to ISD.

Commenters wrote of the article that Jews “continue to vote for the party that hates them” and asked where the “convenient non-practicing Jew [sic] Schumer and Sanders” were. Others baselessly said Biden supporters were responsible for the attack.

The attack on the Chabad Center for Jewish Life in Wilmington in November was the second on a Chabad center in Delaware in three months. In August, arsonists set fire to the Chabad Center for Jewish Life at the University of Delaware in Newark.

The effort to link the Democratic Party to antisemites has been present throughout the 2020 campaign, including after an August primary victory for U.S. Rep. Ilhan Omar, the Minnesota Democrat who has been accused of antisemitism due to some of her comments about Israel, including one mentioning the pro-Israel community’s “allegiance to a foreign country.” Similar discussions followed during demonstrations in Orthodox areas of Brooklyn last month to protest closures of schools and businesses amid a coronavirus spike.

Last week’s Geller posts also echo an effort by President Donald Trump and other Republicans to paint civil unrest as part of “Joe Biden’s America,” generally by exaggerating the violence connected to Black Lives Matter protests against police brutality.

Geller wrote earlier this month that Biden and his running mate, Sen. Kamala Harris of California, “have the Nazi vote,” and in October that Black Lives Matter protesters were part of an “antisemitic terrorist group,” that Democrats are “getting rid of the Jews,” and that Jewish Democrats “serve their nazi-inaspired [sic] masters like Ilhan Omar.”

The Southern Poverty Law Center described Geller as “the anti-Muslim movement’s most visible and flamboyant figurehead.” She is best known for leading the public opposition in 2010 to a 13-story mosque and community center in Lower Manhattan, two blocks from the former site of the World Trade Center.

Her organization, Stop Islamization of America, was responsible for controversial ads on public transport in New York and other cities as recently as 2017 meant to discourage people from practicing Islam. One from 2015 said “Killing Jews is Worship that draws us closer to Allah.”

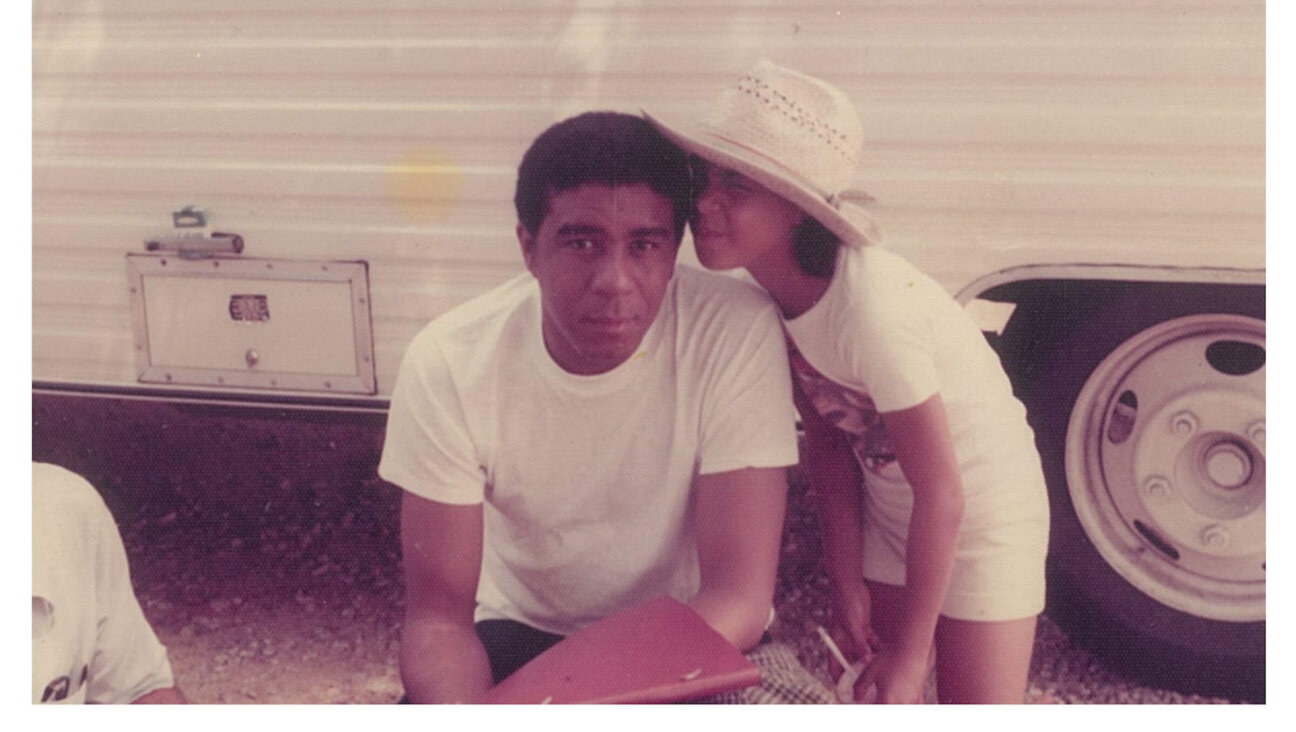

Daniel Rogers Image by Molly Boigon

Danny Rogers is the co-founder of the Global Disinformation Index, a British firm that works to defund disinformation sites. Rogers also teaches at New York University and is a fellow at the Truman Project on National Security. This interview has been lightly edited for length and clarity.

Boigon: GDI has been doing a lot of research on how brands and institutions are inadvertently funding disinformation when their ads are placed on sites. How does this work?

Rogers: Ad support to media had always been incidental. You create content, you get it out there and then you monetize it.

Now, what we’ve seen is the perversion of that equation, where the content itself is being manipulated and adapted and curated in order to purely maximize the attention and thus squeeze every ad dollar out.

So many of these threat actors, including even some of the state threat actors, are either primarily or very nearly primarily motivated by the profit incentive created by this ad tech ecosystem.

Boigon: Do brands know that they are placing ads on disinformation sites funded by foreign and domestic bad actors?

Rogers: I think the thing that people don’t understand is that when you see a Ford Motors or whatever advertisement on a page that you’re visiting, rarely, if ever, has that brand explicitly stated, “We would like to show up on that page.”

So much of open-web ad machinery is what’s called “real-time bid, programmatically placed advertisements,” meaning that the advertiser has indicated you have certain characteristics (demographic, behavioral or otherwise) that they are targeting, and the tracking mechanisms of the internet identify you as such, and Google places that ad in front of you wherever you go on the internet.

That’s why you’ll see similar ads following you around: not because brands are explicitly endorsing the content, but because of the way that ad tech is plugged in. The exchange is funneling money to whichever site happens to be displaying that ad.

Boigon: What is the solution here? Is it regulatory?

Rogers: All of the data that’s being shared to enable the precise targeting of advertisements is, in and of itself, a pretty massive privacy invasion.

But I think the biggest answer to your question is that the brands themselves don’t want this. The brands themselves are particularly terrified of this nightmarish brand safety scenario where, say, a Pfizer ad is showing up alongside anti-vaxx content.

Because of the monopolistic nature of the ad industry, the dominant ad exchange, Google, has little to no incentive to actually offer brands the definitive ability to make that selection, and in fact, it’s actually against Google’s interest. Once the brands have that level of control, they can choose not to place ads in places — which means, very frankly, that Google makes less money.

Part of the regulatory solution is this antitrust stuff that’s happening now, because the brands are relatively powerless. They can’t really go anywhere else for programmatic ads because the space is so dominated by a few massive players.

Boigon: How much money goes to disinformation sites from brand advertisements being placed by ad tech companies?

Rogers: We did a study last year that tried to put a lower bound on the estimate of how much money we’re talking industry-wide, and our estimate is that it’s at least, but probably significantly more than, a quarter billion dollars a year.

DISINFORMATION ROUNDUP

Molly reads, and writes, a lot about disinformation so you don’t have to. Here are three articles from other sources and three from the Forward worth a look.

‘Stop the Steal’ supporters, restrained by Facebook, turn to Parler to peddle false election claims

“The app, which has a free-speech doctrine and has become a haven for groups and individuals kicked off Facebook, experienced its largest number of single-day downloads on Nov. 8, when about 636,000 people installed it, according to market research firm Sensor Tower.” Read more.

YouTube election loophole lets some false Trump-Win videos spread

“‘YouTube saw the inevitable writing on the wall that its platform would be used to spread false claims of election victory and it shrugged,’ said Evelyn Douek, a lecturer at Harvard Law School who studies content moderation and the regulation of online speech.” Read more.

Barr authorizes Justice to probe any ‘substantial allegations’ of voter fraud

“The head of voter fraud investigations at the department, Richard Pilger, stepped down from his post hours after Barr’s announcement.” Read more.

The Forward’s coverage of disinformation and the election

We explored Georgia’s election-weary Jewish community, wrote about QAnon’s role in the Republican Party and covered Jewish groups’ responses to Trump’s false claims of victory.

HATE TRACKERS, WEEK ENDING NOV. 7

ISD has developed a tracker that scrapes social-media platforms for hateful posts, then has researchers review each post, categorize hateful users based on their ideological motivations and use keywords to examine their conversations. To read more about ISD’s methodology, click here, and to see its full weekly report, click here.

This week, ISD tracked the posts through Nov. 7, when the election was called.

Image by Molly Boigon

Top 5 Changes in Online Communities:

- 125% in activity among far-right communities
- 27% in activity among anti-Muslim communities
- 61% in activity among anti-migrant communities
- 20% in activity among misogynistic communities
- 71% in activity among anti-LGBTQ+ communities

Thanks for reading this edition of FAHRENHEIT 411. Let us know what you think: [email protected].
