‘They don’t care’: Why it’s so hard to take antisemitic websites off the internet

The site the Buffalo gunman credited with teaching him about white supremacy is resistant to regulation, as are similar ‘alt-tech’ platforms

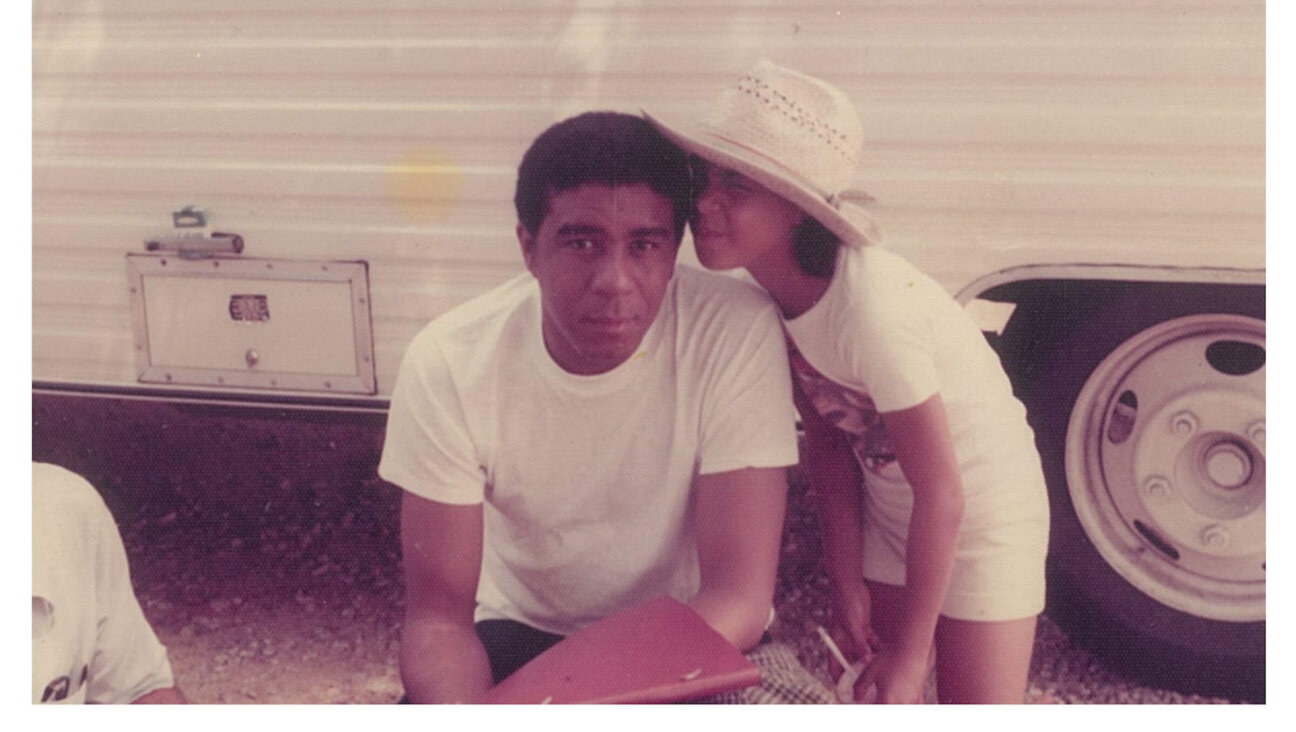

People light candles at a makeshift memorial near a Tops Grocery store in Buffalo, New York, the day after a gunman shot dead 10 people. The alleged shooter appears to have used 4chan, an online forum, to discuss his racist and antisemitic ideology. Photo by Getty Images

After a gunman murdered 10 African Americans at a Buffalo grocery store Saturday, two of the social media websites the alleged killer used to plan and promote the attack — Twitch and Discord — announced quick action in response.

The 18-year-old suspect identified by police appeared to have used Twitch to livestream the shooting as it took place. The company, which is owned by Amazon, announced that it had removed the stream within two minutes. Discord, a platform for private chat rooms that the suspect reportedly used to discuss his antisemitism and desire to kill minorities, said it was investigating his use of the software.

Major social media companies have been under increasing pressure in recent years to crack down on people using their websites to promote hate speech and extremist politics. The Anti-Defamation League, for example, has clashed repeatedly with Facebook over its content moderation policies and Twitter has been embroiled in controversy over its decision to ban some far-right politicians from the website.

But many of the racist extremists who carried out violent attacks in recent years were far more active on “alt-tech” websites like 4chan, Kiwi Farms and BitChute, which face relatively little public scrutiny. Antisemitism is a fixture on almost all of these corners of the internet, ranging from Holocaust jokes to the theories of Jewish control that appear to have animated the Buffalo shooter.

Daniel Kelley, associate director of the ADL’s Center for Technology and Society, said that unlike Facebook, Twitter or YouTube, which insist they want to crack down on extremism, these alternative social media platforms are shameless and immune to pressure.

“There’s no sense in exerting public pressure because they don’t care,” Kelley said. “They don’t want to be good public actors.”

The Buffalo suspect was active on 4chan, an online message board notorious for its bigotry that has long resisted moderation or regulation. Although 4chan was founded in 2003, it is part of an online ecosystem that has boomed in recent years as users kicked off mainstream platforms have started new websites meant to offer the same services with fewer rules.

Kiwi Farms, an online forum used by the 2019 New Zealand mosque shooter, is known for hosting the kind of targeted harassment campaigns that are banned on mainstream websites. BitChute hosts the kind of far-right videos prohibited on YouTube, while DLive offers livestreaming to neo-Nazis and other extremists blocked by Twitch.

“Screw your optics, I’m going in,” the man who killed 11 people at the Tree of Life synagogue in Pittsburgh wrote on Gab, a far-right Twitter alternative, shortly before the 2018 attack.

One way to address platforms whose owners take pride in an “anything goes” approach to content is to pressure the companies that provide their internet infrastructure. After it was revealed that three racist mass shooters in 2019 — the attackers of the New Zealand mosque, Chabad of Poway and an El Paso Walmart — had all regularly posted on 8chan, which is similar to 4chan, several of the third-party services that kept the website online stopped working with the company.

Cloudflare, which shields websites from cyberattacks, stopped protecting 8chan, and Voxility, which owned the servers where 8chan’s data was stored, also terminated its relationship with the site.

“8chan ultimately survived,” according to an analysis last year by American University’s Tech, Law and Security Program, which worked with the ADL, “though the reach of its content and its accessibility to potential new users have been restricted by mainstream service providers’ refusal to associate with the site.”

Kelley said 8chan’s deplatforming can serve as a model for the service providers still working with 4chan and other platforms that offer online communities to violent extremists. He said the ADL was encouraging Cloudflare to set standards for which websites it will protect, but that the company has generally insisted on a content-neutral approach.

“Businesses need to take a stand around who subscribes to their services,” Kelley said.

What is ‘extremist’?

But encouraging internet companies to blacklist certain websites poses censorship concerns, as it effectively removes them from the internet altogether, a more far-reaching sanction than banning a single user from a platform like Twitter or Facebook.

Refusing to work with companies that host illegal content like child pornography, as 8chan was accused of doing, or that are being used to plan mass shootings, is relatively straightforward. But banning websites for extremism is a more fraught concept because there is little agreement on the definition.

Jonathan Greenblatt, the ADL’s chief executive, provoked a furious response from progressives after he delivered a speech earlier this month in which he placed pro-Palestinian groups like Jewish Voice for Peace and the Council on American-Islamic Relations “in the same category as right-wing extremists.”

Kelley declined to say whether the policy the ADL wants companies like Cloudflare to adopt — banning “extremists” from using their services — would apply to a website hosted by, for example, Students for Justice in Palestine.

Ben Lorber, an analyst at the left-wing Political Research Associates, cautioned that online content moderation can treat only a symptom of the wider rise in violent white supremacist ideology.

He emphasized the need to directly address white nationalism and the Great Replacement theory, a racist conspiracy espoused by the Buffalo shooter.

“An approach which entrusts technocrats and mega-corporations to control the contours of public discourse ends up disempowering civil society, and, depending on who gets to define what is considered ‘extremist’ speech, could be used to silence progressive voices on issues of vital public concern,” Lorber said.

There is also a degree of whack-a-mole when it comes to pushing websites like 4chan off the internet, as far-right users are often able to move to new websites. But Kelley said that eliminating some of the most dangerous online forums is often more effective than banning a user from a single website like Twitter.

“The effort it takes to bring a website back online is several magnitudes harder than it is for someone to go from one to another,” he said.
